The blog's voiceover was provided by ElevenLabs.

The guest had a thick accent, and one section needed re-recording for clarity, but they were in a different time zone and unavailable. In the past, I’d have delayed the release or settled for subtitles. Instead, I opened ElevenLabs, uploaded a short clip of the guest’s voice, cloned it in seconds, typed the corrected script, and generated a near-perfect audio take. It captured not just the words but the subtle pauses, emphasis, and natural flow, making it indistinguishable from the real recording. I published on time, listeners noticed nothing unusual, and the episode got its best feedback yet.

That moment crystallized what ElevenLabs has quietly achieved by early 2026: voice AI isn’t a gimmick anymore; it’s becoming the default way we interact with technology. As ElevenLabs CEO Mati Staniszewski said recently at Web Summit Qatar, voice is emerging as the next major interface for AI, moving beyond screens and text to something more intuitive and human.

This isn’t hype. ElevenLabs just closed a massive $500 million Series D round in February 2026 at an $11 billion valuation (led by Sequoia, with Nvidia, a16z, and others doubling down). Its ARR crossed $330 million by the end of 2025, powering everything from Deutsche Telekom’s customer support and Revolut’s conversational commerce to government citizen-engagement services and internal training at Fortune 500 companies (41% of them, by some counts).

So what makes ElevenLabs stand out in a crowded AI audio space? Let’s break it down.

The Core: Ultra-Realistic Text-to-Speech and Voice Cloning

At its heart, ElevenLabs is still best known for text-to-speech (TTS) that sounds eerily human. Their latest model, Eleven v3 (out of alpha and generally available as of February 2026), delivers:

- Lifelike intonation, emotion, and micro-pauses

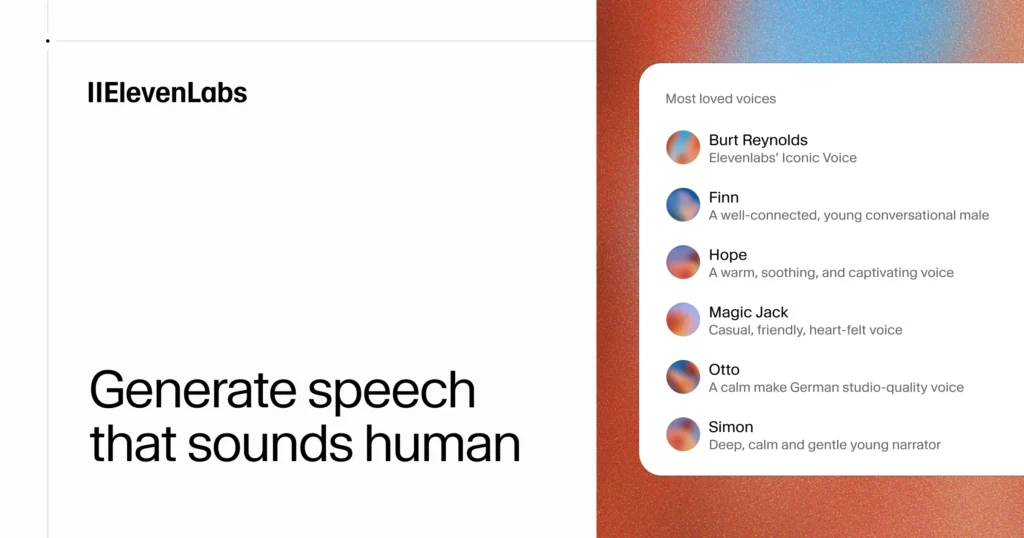

- Support for 70+ languages and thousands of voices

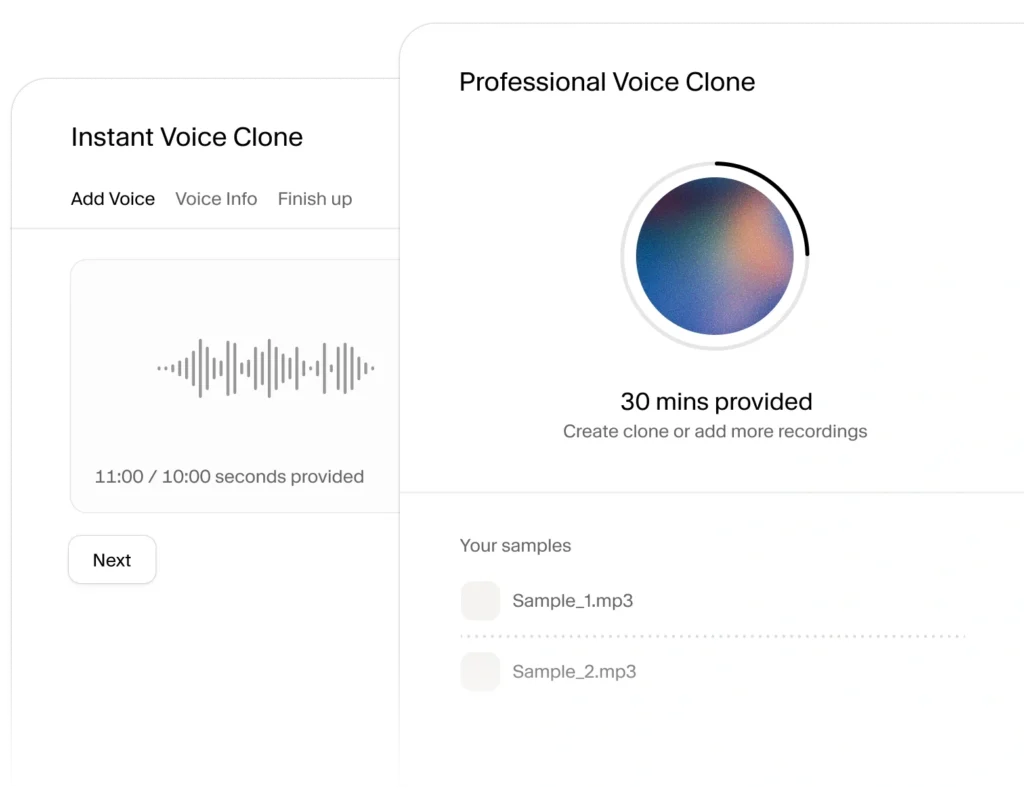

- Instant voice cloning from as little as 10 seconds of audio (though longer samples give even better results)

- Emotional direction via simple tags like [whisper], [shout], or [excited]

You can now direct the AI to act out emotions, control pacing, and add subtle breathing or filler sounds—things that make synthetic speech feel alive rather than robotic.

For creators, this means podcasts, YouTube narrations, audiobooks, video dubs, and ads can be produced faster and cheaper without losing authenticity. For businesses, it powers interactive voice agents that handle calls, support, sales outreach, and more.

Voice Agents: The Real Game-Changer in 2026

The biggest shift isn’t just better voices—it’s what you do with them. ElevenLabs’ ElevenAgents platform (heavily upgraded in early 2026) lets you build conversational AI agents that:

- Respond in real-time with low latency

- Understand context across turns

- Express emotion naturally

- Handle interruptions and make proactive suggestions

Recent updates improved turn-taking (so the agent doesn’t talk over you) and expressiveness, powered by the new v3 Conversational model. Developers and enterprises are using this for:

- Customer support that feels like talking to a real person

- Sales bots that qualify leads via phone or voice chat

- Internal training simulations

- Citizen services (like government hotlines)

The CEO’s vision is clear: as AI spreads to wearables, cars, smart homes, and AR glasses, we’ll talk to our devices more than we tap screens. ElevenLabs is positioning itself as the voice layer for that future.

Practical Ways People Are Using ElevenLabs Right Now (2026)

From my own experiments and what I’m seeing in communities:

- Content Creators → Clone your voice for consistent narration across videos/podcasts, or generate multilingual versions of content.

- Businesses → Automate outbound sales calls, inbound support, or personalized onboarding messages.

- Developers → Integrate via secure APIs/SDKs into apps, games, or agents.

- Educators → Create interactive language lessons or read-aloud stories.

- Accessibility → Real-time dubbing, or spoken narration for visually impaired users.

One fun side use: pairing ElevenLabs voices with short-form video tools (like the ones in our guide “How to Create Viral Talking Object Videos with AI in 2026: Easy Step-by-Step Guide”) to give animated characters ultra-realistic dialogue.

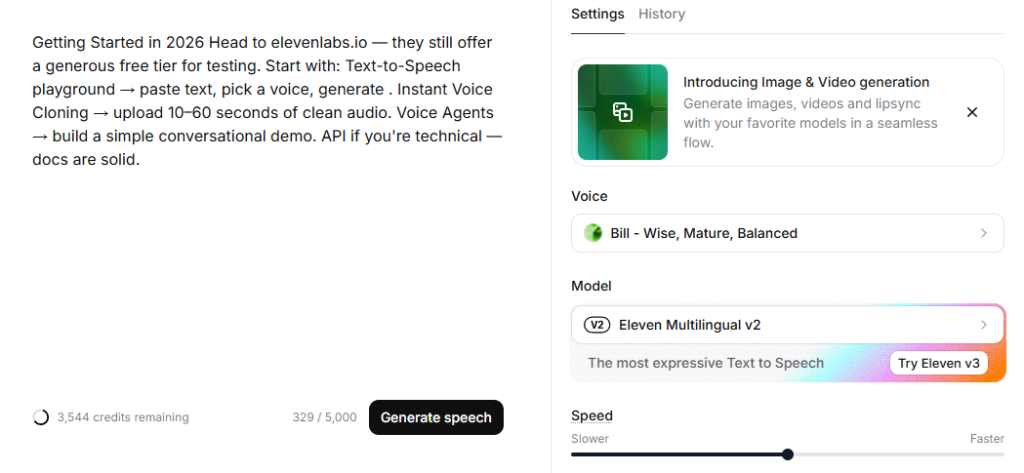

Getting Started in 2026

Head to elevenlabs.io — they still offer a generous free tier for testing. Start with:

- Text-to-Speech playground → paste text, pick a voice, generate.

- Instant Voice Cloning → upload 10–60 seconds of clean audio.

- Voice Agents → build a simple conversational demo.

- API → if you’re technical, the docs are solid.
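If you go the API route, here is a minimal Python sketch of a text-to-speech call using only the standard library. It assumes the REST endpoint `https://api.elevenlabs.io/v1/text-to-speech/{voice_id}`, the `xi-api-key` auth header, and the model ID `eleven_v3`; those reflect ElevenLabs’ public docs as I understand them, so check them against the current API reference before relying on this. The helper names `build_tts_request` and `synthesize` are my own, and the key and voice ID are placeholders. The script also shows the bracketed emotion tags mentioned earlier.

```python
import json
import urllib.request

# Assumed base URL for the ElevenLabs REST API; confirm in the official docs.
API_BASE = "https://api.elevenlabs.io/v1"


def build_tts_request(api_key: str, voice_id: str, text: str,
                      model_id: str = "eleven_v3") -> urllib.request.Request:
    """Build (but do not send) a text-to-speech POST request.

    The endpoint path, the xi-api-key header, and the JSON field names are
    assumptions based on ElevenLabs' public REST docs; verify before use.
    """
    body = json.dumps({"text": text, "model_id": model_id}).encode("utf-8")
    return urllib.request.Request(
        f"{API_BASE}/text-to-speech/{voice_id}",
        data=body,
        headers={
            "xi-api-key": api_key,               # assumed auth header name
            "Content-Type": "application/json",
            "Accept": "audio/mpeg",              # ask for MP3 audio back
        },
        method="POST",
    )


def synthesize(req: urllib.request.Request, out_path: str) -> None:
    """Send the request and write the returned audio bytes to a file."""
    with urllib.request.urlopen(req) as resp, open(out_path, "wb") as f:
        f.write(resp.read())


if __name__ == "__main__":
    # Placeholder credentials; replace with your own key and voice ID.
    script = "[whisper] I have a secret. [excited] We shipped on time!"
    req = build_tts_request("YOUR_API_KEY", "YOUR_VOICE_ID", script)
    print(req.get_method(), req.full_url)
    # synthesize(req, "take.mp3")  # uncomment once real credentials are set
```

Keeping request construction separate from sending lets you inspect or test the payload offline before spending any credits. If you’d rather not handle HTTP yourself, ElevenLabs also publishes official SDKs.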

Pricing scales with usage, but for creators and small teams, it’s become very accessible compared to 2024–2025.

Final Thoughts: Why Voice AI Feels Different This Time

In 2026, ElevenLabs isn’t just another TTS tool—it’s infrastructure for the post-screen era. When voice becomes the primary way we command AI, ask questions, shop, learn, and connect, the quality of that voice matters enormously. ElevenLabs nailed realism early, then doubled down on agents and enterprise scale.

If you’re creating content, running a business, or building products, voice AI deserves a spot in your toolkit. It’s no longer “nice to have”; it’s the next interface.

What are you building (or thinking of building) with voice AI? Drop your use case in the comments—I’d love to hear and maybe share tips.

For more on tying voice tools into bigger systems:

- From Inbox Chaos to Calm: How AI Email Agents Gave Me Back My Week in 2026

- How to Create Viral Talking Object Videos with AI in 2026: Easy Step-by-Step Guide

- Social Media Growth in 2026: Key Trends, Strategies, and Tools

- AI Guides & Tutorials in 2026: Building Scalable Systems with Automation, AI Tools, and Smart Workflows

Here’s to voices that sound human—even when they’re not. 🎙️
